Technology

Google AI can rate photos based on aesthetic appeal

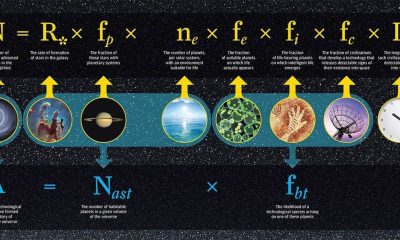

Some scenes are lovely because they tug at human feelings, something machines lack. Other landscapes, desert dunes in particular, can look like nudes to robot eyes. Google has introduced a neural image assessment to pick out the most aesthetically appealing photos.

The assessment uses a deep neural network trained on data labeled by humans. It has been trained to predict which photos a typical user would rate as technically good-looking or aesthetically appealing.

According to Google, it could potentially be used to edit photos intelligently, increase visual quality, or remove perceived visual errors from an image. Edits include recommendations for optimal levels of brightness, highlights, and shadows, similar to the AI tools Adobe showcased back in October.

The Google assessment draws on reference photos if they are available; if not, it uses statistical models to predict photo quality. The goal is a quality score that matches human perception, even when the photo is distorted. Google found that the ratings produced by the assessment were similar to those given by human raters.

The company hopes that AI will be able to help users pick the best photos out of many, or provide real-time feedback on photography. For now, these models remain in-house as proofs of concept published in a Cornell research paper.
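The split between scoring with and without a reference photo can be illustrated with a small sketch. This is not Google's model: it assumes grayscale images given as nested lists of pixel values from 0 to 255, uses peak signal-to-noise ratio (PSNR) as a stand-in full-reference metric, and uses the standard deviation of pixel intensities as a crude stand-in for a statistical no-reference estimate. The function names `psnr`, `contrast_score`, and `assess` are hypothetical.

```python
import math
from typing import Optional, Sequence, Tuple

# Assumption: a grayscale image is a list of rows of pixel values in [0, 255].
Image = Sequence[Sequence[float]]


def psnr(reference: Image, photo: Image, peak: float = 255.0) -> float:
    """Full-reference score: peak signal-to-noise ratio in decibels."""
    squared_error = 0.0
    count = 0
    for ref_row, row in zip(reference, photo):
        for ref_pixel, pixel in zip(ref_row, row):
            squared_error += (ref_pixel - pixel) ** 2
            count += 1
    mse = squared_error / count
    if mse == 0:
        return math.inf  # identical images
    return 10.0 * math.log10(peak ** 2 / mse)


def contrast_score(photo: Image) -> float:
    """No-reference stand-in: standard deviation of pixel intensities."""
    pixels = [pixel for row in photo for pixel in row]
    mean = sum(pixels) / len(pixels)
    return math.sqrt(sum((p - mean) ** 2 for p in pixels) / len(pixels))


def assess(photo: Image, reference: Optional[Image] = None) -> Tuple[str, float]:
    """Use the reference when one is available, otherwise fall back
    to a statistic computed from the photo alone."""
    if reference is not None:
        return ("full-reference", psnr(reference, photo))
    return ("no-reference", contrast_score(photo))
```

Calling `assess(photo, reference)` returns the PSNR against the reference, while `assess(photo)` falls back to the contrast statistic. A learned model such as the one described in the article would replace both stand-ins with a network trained on human ratings.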

You may like

Technology

The Case for Custom eLearning Platforms: Why Organizations Are Making the Switch

The corporate eLearning market has exploded in recent years, growing over 800% since 2000. As the demand for eLearning continues to accelerate, more and more organizations are finding that off-the-shelf solutions cannot keep pace with their training needs. This has led many companies to make the switch to custom-built eLearning platforms tailored specifically for their requirements.

There are several key reasons driving the demand for customized eLearning tools:

Greater Flexibility and Scalability

Generic eLearning software packages often impose rigid constraints that limit their ability to adapt to an organization’s evolving needs. Meanwhile, the “one-size-fits-all” approach fails to support the personalized learning critical for employee development. Custom platforms provide flexibility to add and modify features to match ever-changing business goals. As companies scale training across global workforces, custom solutions built on cloud infrastructure can scale seamlessly to handle growing demand.

Deeper Integration Across Systems

Smooth integration with existing HR, LMS, and other business systems is critical for optimizing training workflows. However, off-the-shelf tools rarely integrate well, creating data and process silos. Custom platforms can tightly integrate role-based learning paths with core business applications, sync user profiles, enable single sign-on, and more. This level of integration makes the training function more effective.

Better Data and Analytics

Generic software severely limits access to data insights that drive improvement. Custom platforms unlock a trove of analytics on content consumption, learner progression, platform adoption, and real-time feedback. Integrated analytics dashboards and APIs allow businesses to derive deep visibility across the learner lifecycle. These insights help continuously enhance learner experience, target development gaps, and demonstrate direct training ROI.

Enhanced Learner Engagement

For modern learners accustomed to consumer-grade digital experiences, poor platform usability quickly erodes engagement. Custom designs allow companies to incorporate familiar features from popular apps and websites while optimizing for their audience. Adaptive learning approaches further personalize content to individual styles and needs. With a modular component architecture, custom platforms can adopt new modalities like AR/VR to captivate learners.

Brand and Culture Alignment

Off-the-shelf tools impose a generic and often disruptive experience that clashes with existing brand identity and culture. In contrast, custom platforms allow organizations to carry over familiar styling, voice, and workflow patterns. Consistency in experience preserves brand recognition while smoother onboarding leads to wider adoption across all employee groups. Over time, the platform can evolve alongside cultural changes as well.

While custom eLearning tools require greater upfront investment, for enterprise training needs the long-term benefits far outweigh the costs. The ability to mold platforms to current and future needs results in greater leverage from learning spend.

As businesses demand ever more from their learning technology, custom solutions provide the agility needed for true scale. Rather than forcing training functions into the constraints of generic software, custom eLearning development keeps the focus on nurturing talent and capabilities. For any organization looking to drive workforce transformation through learning, custom eLearning represents the way forward.

Technology

Pintarnya raises $16.7M to power jobs and financial services in Indonesia

Pintarnya, an Indonesian employment platform that goes beyond job matching by offering financial services along with full-time and side-gig opportunities, said it has raised a $16.7 million Series A round.

The funding was led by Square Peg with participation from existing investors Vertex Venture Southeast Asia & India and East Ventures.

Ghirish Pokardas, Nelly Nurmalasari, and Henry Hendrawan founded Pintarnya in 2022 to tackle two of the biggest challenges Indonesians face daily: earning enough and borrowing responsibly.

“Traditionally, mass workers in Indonesia find jobs offline through job fairs or word of mouth, with employers buried in paper applications and candidates rarely hearing back. For borrowing, their options are often limited to family/friend or predatory lenders with harsh collection practices,” Henry Hendrawan, co-founder of Pintarnya, told TechCrunch. “We digitize job matching with AI to make hiring faster and we provide workers with safer, healthier lending options — designed around what they can reasonably afford, rather than pushing them deeper into debt.”

Around 59% of Indonesia’s 150 million workforce is employed in the informal sector, highlighting the difficulties these workers encounter in accessing formal financial services because they lack verifiable income and official employment documentation.

Pintarnya tackles this challenge by partnering with asset-backed lenders to offer secured loans, using collateral such as gold, electronics, or vehicles, Hendrawan added.

Since its seed funding in 2022, the platform has grown to serve over 10 million job seekers and 40,000 employers nationwide. Its revenue has increased almost fivefold year-over-year, and the company expects to reach break-even by the end of the year, Hendrawan noted. Pintarnya primarily serves users aged 21 to 40, most of whom have a high school education or a diploma below university level. The startup aims to focus on this underserved segment, given the large population of blue-collar and informal workers in Indonesia.

“Through the journey of building employment services, we discovered that our users needed more than just jobs: they needed access to financial services that traditional banks couldn’t provide,” said Hendrawan.

While Indonesia already has job platforms like JobStreet, Kalibrr, and Glints, these primarily cater to white-collar roles, which represent only a small portion of the workforce, according to Hendrawan. Pintarnya’s platform is designed specifically for blue-collar workers, offering tailored experiences such as quick-apply options for walk-in interviews, affordable e-learning on relevant skills, in-app opportunities for supplemental income, and seamless connections to financial services like loans.

The same trend is evident in Indonesia’s fintech sector, which similarly caters to white-collar or upper-middle-class consumers. Conventional credit scoring models for loans, which rely on steady monthly income and bank account activity, often leave blue-collar workers overlooked by existing fintech providers, Hendrawan explained.

When asked about which fintech services are most in demand, Hendrawan mentioned, “Given their employment status, lending is the most in-demand financial service for Pintarnya’s users today. We are planning to ‘graduate’ them to micro-savings and investments down the road through innovative products with our partners.”

The new funding will enable Pintarnya to strengthen its platform technology and broaden its financial service offerings through strategic partnerships. With most Indonesian workers employed in blue-collar and informal sectors, the co-founders see substantial growth opportunities in the local market. Leveraging their extensive experience in managing businesses across Southeast Asia, they are also open to exploring regional expansion when the timing is right.

“Our vision is for Pintarnya to be the everyday companion that empowers Indonesians to not only make ends meet today, but also plan, grow, and upgrade their lives tomorrow … In five years, we see Pintarnya as the go-to super app for Indonesia’s workers, not just for earning income, but as a trusted partner throughout their life journey,” Hendrawan said. “We want to be the first stop when someone is looking for work, a place that helps them upgrade their skills, and a reliable guide as they make financial decisions.”

Technology

OpenAI warns against SPVs and other ‘unauthorized’ investments

In a new blog post, OpenAI warns against “unauthorized opportunities to gain exposure to OpenAI through a variety of means,” including special purpose vehicles, known as SPVs.

“We urge you to be careful if you are contacted by a firm that purports to have access to OpenAI, including through the sale of an SPV interest with exposure to OpenAI equity,” the company writes. The blog post acknowledges that “not every offer of OpenAI equity […] is problematic” but says firms may be “attempting to circumvent our transfer restrictions.”

“If so, the sale will not be recognized and carry no economic value to you,” OpenAI says.

Investors have increasingly used SPVs (which pool money for one-off investments) as a way to buy into hot AI startups, prompting other VCs to criticize them as a vehicle for “tourist chumps.”

Business Insider reports that OpenAI isn’t the only major AI company looking to crack down on SPVs, with Anthropic reportedly telling Menlo Ventures it must use its own capital, not an SPV, to invest in an upcoming round.

Latest

Ahead of NBA Finals, NYC Reverses Ban on Knicks Watch Party Outside Madison Square Garden

The decision came on Wednesday as the Knicks prepared for Game 1 of their series against the Spurs for the...

Oracle (ORCL) Stock Sinks As Market Gains: Here’s Why

In the latest close session, Oracle (ORCL) was down 1.44% at $244.58. The stock fell short of the S&P 500,...

Blue Origin Issues Official Statement on New Glenn Explosion

On May 28th, at Launch Complex-36 A (LC-36A), located at the Cape Canaveral Space Force Station (CCSFS) in Florida, Blue...

Recipients need to prove they work or volunteer – NBC4 Washington

Big changes are coming to a federal program that has been helping low-income families pay for food for generations. The...

Taylor Swift’s former friend Karlie Kloss scores wedding invite after rumored feud: report

Taylor Swift’s highly discussed guest list for her upcoming wedding will now apparently include one debated friend who reportedly was...

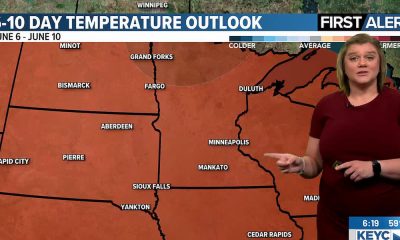

Quiet start to the week; rain, heat, humidity return by this weekend

Conditions will be warm and quiet to kick off the work week before shower and thunderstorm chances ramp up bringing...

Actor Nick Pasqual sentenced to 32 years to life for brutal stabbing

Nick Pasqual, an actor who appeared in “How I Met Your Mother,” has been sentenced to 32 years to life...

Jennifer Lopez drops her age-defying nighttime skincare routine

Page Six may be compensated and/or receive an affiliate commission if you click or buy through our links. Featured pricing...

International Friendly LIVE: Wales vs Ghana – score, TV coverage, radio commentary, text updates & match stats

Team news – Ampadu and Moore return as Wales make four changespublished at 19:04 BST 19:04 BST Wales v Ghana...

Iran War Live Updates: Israel Strikes Southern Lebanon After Pulling Back From Threat to Beirut

Under pressure from President Trump, Prime Minister Benjamin Netanyahu of Israel held off from attacking Beirut. But he vowed to...

Stripping U.S. citizenship for some is harder than Trump vowed : NPR

Illustration by Hanna Barczyk Stay up to date with our Politics newsletter, sent weekly. The Trump administration has vowed to...

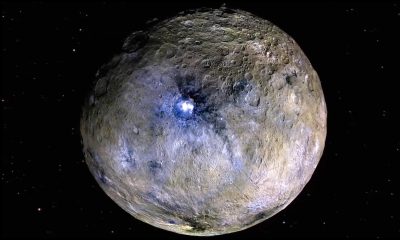

Ceres’ Surface Is Much More Complex Than Previously Thought

The long, puzzling dwarf planet Ceres, in reality the first named asteroid, has surface features that are much more complex...

Black Crowes singer Chris Robinson booed after mocking Florida fans’ ‘USA’ chant

Black Crowes singer Chris Robinson was booed after mocking fans for a “USA” chant at a Florida show — then...

Dallas police search for missing 9-year-old boy

Dallas Police Department Dallas police are searching for a missing 9-year-old boy last seen on Monday evening. Dallas PD said...

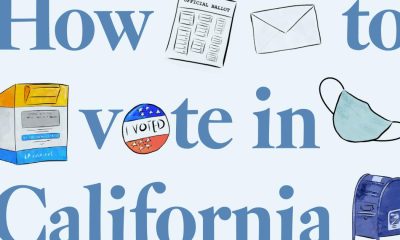

In-person voting in California: Your last-minute guide

To vote in person, Californians, you have until Tuesday at 8 p.m. to get to a voting center and cast...

Nancy Guthrie targeted by worker who knew of Savannah, expert says

NEWYou can now listen to Fox News articles! LAS VEGAS — A leading forensic scientist who spent decades with one...

How to watch ‘Love Island UK’ Season 13 live in the US: Time, cast

Page Six may be compensated and/or receive an affiliate commission if you click or buy through our links. Featured pricing...

What to Watch For in the NY Primary: Key Races and Why They Matter

Congressional primaries in Manhattan, Brooklyn and beyond could test the strength of Mayor Zohran Mamdani’s socialist movement and reverberate across...

Charli xcx New Album Reveals Title, Release Date, Scorsese on Cover

Charli xcx‘s new era is officially upon us. The “Brat” singer revealed on Monday that her seventh studio album is...

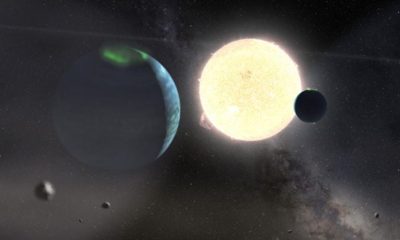

Longest-period young transiting exoplanets discovered

It’s 2234, you’re on your annual class field trip touring exoplanets, and your teacher informs everyone they can pick one...

University of Arizona’s school population is shrinking, but it’s on purpose

The University of Arizona is getting smaller — and school officials say that’s on purpose. The first-year class starting in...

‘Euphoria’ ending explained: Rue’s fate

Warning: Spoilers ahead! Do not proceed unless you’ve watched the Season 3 finale of Euphoria.” Move over, “Game of Thrones”...

California statewide voter guide: Insurance commissioner, controller and more

Much of California has been focused on the wild race to replace Gavin Newsom as governor. But voters will also...

Live score updates for Texas Tech softball vs UCLA in Women’s College World Series

OKLAHOMA CITY — The Texas Tech softball team needs to score a win over UCLA today to avoid elimination from...

Orioles beat Blue Jays behind ninth-inning comeback

BALTIMORE — The Orioles have a belief they can win any game. They’ve said it. They’ve shown it. But at...

China’s Rise in Drug Development Looms Over U.S.

Clinical trials in China are getting attention at an international oncology gathering in Chicago. China’s surging biotechnology industry is fueling...

How that viral ‘Off Campus’ Jennifer Lopez costume came together

Page Six may be compensated and/or receive an affiliate commission if you click or buy through our links. Featured pricing...

NA ŻYWO: Iga Świątek – Marta Kostiuk w Roland Garros! Relacja i wynik live

Czterokrotna triumfatorka paryskiego Szlema imponuje od początku tegorocznej edycji. Świątek przeszła przez pierwsze trzy rundy bez straty seta, pewnie pokonując...

Lasers at the Lunar Poles Could Help Astronauts Navigate

A team of scientists is exploring ways to use dark craters at the lunar poles as sites for ultrastable lasers...

The Yankees are playing a dangerous game with Ryan Weathers

--> Ryan Weathers has not been the Yankees‘ best starting pitcher this season, but he’s arguably been the biggest surprise....

Tate McRae, Mindy Kaling, Jennifer Lopez, Olivia Rodrigo and more

Star snaps of the week: Tate McRae, Mindy Kaling, Jennifer Lopez, Olivia Rodrigo and more Kourtney Kardashian stocks up on...

Hollywood Walk of Fame killing: Family demands justice

The family of a Hollywood man is demanding justice after their loved one was attacked by a dog, beaten and...

Marlins At Mets: Bobby V And Mazzilli Enter The Mets Hall Of Fame On Saturday, With Beltrán To Follow In September

NEW YORK — The Miami Marlins (26-31) arrive at Citi Field on Friday for three games against the New York...

As Trump Mulls Decision About Iran War Deal, a Restive Middle East Waits to Hear

The president has wavered on whether to move ahead with an agreement with Iran to end the war. On Friday,...

WWE News, AEW News, Pro Wrestling Backstage News

Ric Flair alleges trademark infringement. On social media, Ric Flair said that someone is using his “Flair” trademark. He warned...

Vanilla Ice defends Freedom 250 concert amid performer exodus

Vanilla Ice is standing by the Freedom 250 concert celebrating America’s milestone birthday after several performers pulled out this week,...

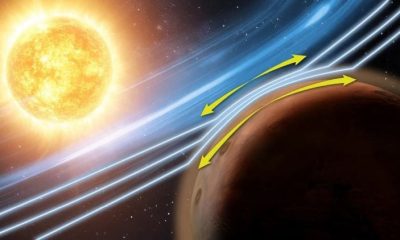

MAVEN Spacecraft Finds New Plasma Squeezing at Mars

A cloaked alien invasion force is approaching Earth and coming up on Mars. The first officer looks through a viewfinder...

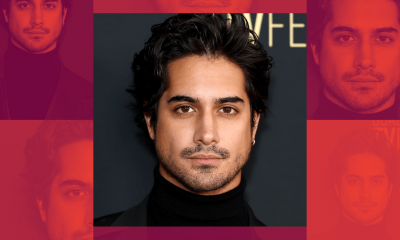

Backrooms Actor Avan Jogia Wants to Bring You Into His World

After two decades in the entertainment industry, Avan Jogia has definitely learned a thing or two. Getting his start in...

Pleas and political attacks fill the home stretch of California governor’s race

The top candidates for California governor crisscrossed the state Friday, all venturing to friendly political territory to woo voters and...

Pete Davidson praises Kim Kardashian in rare comments about his ex

The king of Staten Island has nothing but good things to say about the queen of the Kardashians. “Isn’t it...

WNBA betting preview: Why the Sparks-Mystics total is the play with Kelsey Plum sidelined tonight

Lost in the shuffle of the NHL and NBA Playoffs, and MLB labor talks is the fact that the WNBA...

Is Spencer Pratt for Real?

The former reality star’s dark visions of Los Angeles are resonating in a heated mayoral race, even if they’re far...

Boulder police use Flock to illegally surveil people, lawsuit alleges

The Boulder Police Department uses its fleet of Flock cameras to illegally surveil people without any probable cause, a class-action...

Martina McBride quits Freedom 250 festival meant to celebrate America’s birthday

Country star Martina McBride has dropped out of the Freedom 250 concert series one day after it was announced, claiming...

Man arrested for threats to kill Erika Kirk ahead of Turning Point USA event in San Antonio, affidavit says

SAN ANTONIO – A San Antonio man was arrested early Thursday after he was accused of threatening to kill Erika...

JWST Studies a Dark and Airless Super-Earth

There’s a planet out there called LHS 3844 b, orbiting a star about 48 light-years away. The Transiting Exoplanet Survey...

Lakers have two reasons not to pursue Thunder guard Lu Dort this summer

--> The Los Angeles Lakers will be on the prowl this offseason for the type of 3-and-D prowess that Luguentz...

Perris skydiving accident leaves one dead, one in critical condition

One person was killed and another was in critical condition following a skydiving accident in Perris on Thursday afternoon, authorities...

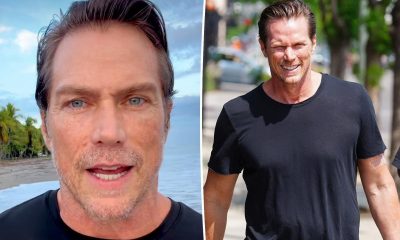

‘Sex and the City’ alum reveals surprising career move after coming out of hiding

And just like that, Jason Lewis is an author. The “Sex and the City” alum, 54, revealed he moved to...

ATP French Open Best Bets Including Stefanos Tsitsipas vs Matteo Arnaldi

The French Open second round on May 28 features several intriguing matchups between rising talents and experienced clay-court players. Adolfo...

Iran War Live Updates: U.S. and Iran Trade Strikes, Further Threatening Negotiations

The U.S. military said Iran had launched a ballistic missile toward Kuwait. Iran’s Revolutionary Guards said it had targeted an...

Champions League final: Why Paris St-Germain could have edge over Arsenal

Some of the numbers for individual players are eye-catching. Les Parisiens’ club captain Marquinhos has started 14 of those European...

Travis Kelce hints he and Taylor Swift are considering huge life change after highly anticipated wedding

Travis Kelce gave a sign that he and Taylor Swift are changing their last names after getting married. The NFL...

Astrophysical Calibration Could “Autotune” Gravitational Wave Detection

Ever since gravitational waves were first confirmed in 2017 by scientists at the Laser Interferometer Gravitational Wave Observatory (LIGO), over...

COMEDIAN JOSH JOHNSON TAKES ON HULK HOGAN, POLITICS EVOLVING INTO WWE AND MORE

COMEDIAN JOSH JOHNSON TAKES ON HULK HOGAN, POLITICS EVOLVING INTO WWE AND MORE By Mike Johnson on 2026-05-27 08:25:00 Comedian...

Late-season snow helps Mammoth Mountain extend skiing season

Following heavy April storms and recent low temperatures, Mammoth Mountain has extended its ski season through June 7. Mammoth Resorts...

Madison Beer Blushes While Getting Flirty With Justin Herbert As Chargers QB Crashes Pop Icon’s Live Q&A

A scene from Madison Beer’s concert soundcheck in Amsterdam for her “Locket Tour” went viral on X on Tuesday. While...

‘Summer House’ stars and more

That’s one way to mix fashion and technology. Celebrities like Maggie Gyllenhaal, Emma Roberts and Lourdes Leon stopped by Longchamp’s...

Uganda Closes Border With Congo Over Ebola Fears

Ebola response teams and a few others are exempt and will undergo “strict health screening,” a top Ugandan official said.

Vaibhav Sooryavanshi’s cool approach leaves Dasun Shanaka impressed: Haven’t seen a kid like that

At just 15, Vaibhav Sooryavanshi has already become one of the biggest stories of IPL 2026. His fearless six-hitting and...

NASA TESS Reveals Epic All-Sky Map of Distant Worlds

You’re on a camping trip with your family and your parents tell you to turn off all the lights. But,...

Houston weather: Storms possible overnight, Severe Thunderstorm Watch

HOUSTON – A Severe Thunderstorm Watch is in effect until 5 a.m. Wednesday for several Southeast Texas counties. The areas...

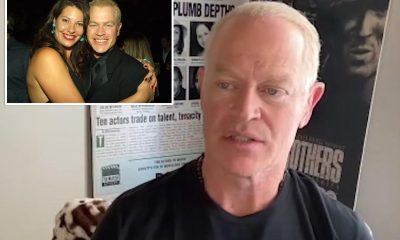

Neal McDonough says Hollywood labeled him a ‘religious nut,’ cost him his career and home

Neal McDonough is looking back on a dark moment in his life and career. During a recent interview with Fox News...

Spurs’ Mitch Johnson Finishes Third in Coach of the Year Voting

--> OKLAHOMA CITY — Mitch Johnson was in the running for Coach of the Year in his first full season...

Trump administration sues UCLA, alleging antisemitic environment festered

The Trump administration on Tuesday sued the University of California, alleging that UCLA is “deliberately indifferent” to antisemitic harassment of...

French Open Predictions Including Auger-Aliassime vs Altmaier

The first round of the French Open concludes on Day 3 as 20 more men’s matches take the grounds of...

Paige DeSorbo declared this $30 Amazon cardigan ‘perfect’

Page Six may be compensated and/or receive an affiliate commission if you click or buy through our links. Featured pricing...

SpaceX IPO Filing Reveals Favorable Terms for Elon Musk

The ways it set up its board and Mr. Musk’s pay appear to benefit him at the expense of other...

Here’s Why Micron (MU) is Among the 15 High Growth Stocks to Buy and Hold for the Next Decade

Micron Technology, Inc. (NASDAQ:MU) is one of the 15 High Growth Stocks to Buy and Hold for the Next Decade....

How Mars Can Help Us Understand ‘Marginal’ Exoplanets

Mars holds a special place in the Solar System. It represents marginal habitability. This means it transitioned from warm and...

Hollywood icon Sally Field reminds a fractured nation of the brilliance of the Constitution

Actress Sally Field used a recent television appearance to praise the First Amendment, reflecting on the importance of free speech in...

Charles Barkley gets into hysterical back-and-forth with Knicks star OG Anunoby about his ‘real name’

New York Knicks star OG Anunoby spoke with the “Inside the NBA” crew following his team’s blowout victory over the...

Southern California should get more of its water locally, groups say

A coalition of conservation groups wants Southern California to get 85% of its water locally, up from the 50% it...

Aaron Judge RBI drought hits career-long 10 games

NEW YORK — When Ben Rice launched his 16th home run of the season earlier this week, grabbing a share...

Toshifumi Suzuki, Who Made 7-Eleven a Giant in Japan, Dies at 93

He spent four decades building the convenience store chain into a cornerstone of daily life.

Jennifer Lopez busts a move in lace-up jeans from 2001 ‘Ain’t It Funny’ music video

Jennifer Lopez is giving us nostalgia served hot! The pop star, 56, slipped into the exact same jeans she wore...

SeaWorld Orlando expands Expedition Odyssey with new Arctic adventure

If you need help with the Public File, call (407) 291-6000 At WKMG, we are committed to informing and delighting...

A Brief-ish History of SETI. Part VII: Brief Windows and Transcendence

Welcome back to our continuing series on the Brief-ish History of SETI. In our previous installments, we looked at the...

BTS fans pack Las Vegas Chinatown as Allegiant Stadium shows begin

LAS VEGAS (FOX5) — As hundreds of thousands of fans of K-Pop group BTS head into Las Vegas for Memorial...

‘Euphoria’ kills off Jacob Elordi’s Nate Jacobs

He’s not feeling euphoric. Warning: Spoilers ahead! Do not proceed unless you’ve watched “Euphoria’s” seventh episode of Season 3. “Euphoria”...

Potential crack on Garden Grove chemical tank, reducing explosion risk

With evacuation shelters reaching capacity as more than 40,000 people were asked to leave their homes, officials laboring to prevent...

James Harden, Carmelo Anthony & the AI-Powered Hollywood Pivot

James Harden has spent more than a decade as one of basketball’s most mercurial superstars — an MVP, multiple-time scoring...

Rocket League On Unreal Engine 6 Announced At Paris Major

During the ongoing 2026 Rocket League Championship Series Paris Major, Epic Games and Psyonix delivered on a major announcement they’d...

Kyiv, Ukraine, Hit in Russian Missile Attack

Buildings rattled in the Ukrainian capital for hours early Sunday. Russia launched an Oreshnik intermediate-range ballistic missile for only the...

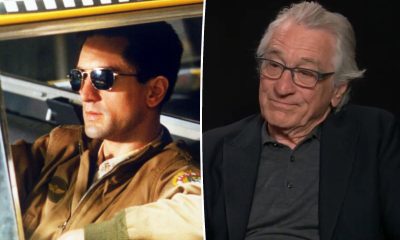

Robert De Niro had no idea ‘Taxi Driver’ would become a classic

You talkin’ to him? Robert De Niro had no idea his 1976 film “Taxi Driver” would be lauded as a...

Sabalenka, Swiatek, Svitolina… qui pour succéder à Coco Gauff à Roland-Garros ?

En vous abonnant, vous accédezà la source de référence sur l’actu sportive.En vous abonnant, vous accédez à la source de...

SpaceX’s Next-Gen Starship Passes Its First Flight Test Despite Snags

SpaceX’s next-generation Starship V3 rocket got off to a glorious start for its first test flight, and although not all...

Knicks made a Donovan Mitchell adjustment the Cavaliers have no answer for

--> Of the many adjustments the New York Knicks made in Game 1 against the Cleveland Cavaliers, the decision to...

Laura Clery praises first responders after being crushed by fridge

Comedian and social media star Laura Clery praised the first responders who helped her after she was pinned beneath a...

How will the Orange County chemical crisis be resolved? Here is what we know

• The temperature inside the failing tank has risen to 90 degrees, up from 77 a day earlier. The boiling...

The St. Louis Cardinals have an emerging star in Jordan Walker

On this day, 24 years ago, Jordan Walker was born. Happy Birthday! Young by baseball standards, it feels like we’ve...

Iran and U.S. Officials Signal Progress as Cease-Fire Hangs in Balance

As people across the Middle East braced for the possibility of renewed fighting, officials from both sides said there were...

SVU Is Doubling Down on 1 Beloved Character in Season 28

Law & Order: SVU Season 28 is set to bring back a fan-favorite character. The showrunner recently teased a major...

Scarlett Johansson says ‘there is no work-life balance’ in candid interview

Scarlett Johansson has finally admitted that the idea of a good work-life balance does not exist. During an interview with CBS Sunday...

Mars Fungi Could Make Red Planet Regolith Fertile for Crops

You’re on the fourth human mission to Mars, and you’ve been tasked with establishing the first self-sustaining food crop on...

Portland’s CityFair opens Friday with fireworks, food and family fun

PORTLAND, Ore. (KATU) — CityFair kicks off Friday evening at Portland Waterfront Park. KATU is the new home of the...

‘Housewives’ star Erika Girardi settles $25-million civil lawsuit

Pop crooner and “Real Housewives of Beverly Hills” star Erika Girardi quietly put an end to a long and splashy...

Yankees vs. Rays odds, prediction, line, time: 2026 MLB picks for May 22 from proven model in Gerrit Cole’s return

Gerrit Cole will make his 2026 season debut when the New York Yankees battle the American League East Division-rival Tampa...

Miley Cyrus wears vintage Versace dress for Hollywood Walk of Fame Star ceremony

Miley Cyrus wore a vintage Atelier Versace gown for her Hollywood Walk of Fame Star ceremony. John Salangsang/Shutterstock From one...

Move Over, Private Equity. It’s Great to Be a Banker Again.

They played second fiddle to private equity and hedge funds for years, but 2026 is shaping up to be “the...

Entertainment

‘Supergirl’ actress Milly Alcock mocks critics, says a lot of them are Christian dads

New ‘Supergirl’ star Milly Alcock pushed back against online critics ahead of the film’s release, saying many of the people criticizing...

Blake Lively and Ryan Reynolds are $2M deep in contractor debt

Blake Lively and Ryan Reynolds are staring down more than $2.1 million in unpaid contractor debt tied to their sprawling...

‘Top Gun’ actor Miles Teller defends his reputation after self-imposed, decade-long media ban from ‘mishandled’ profile

Miles Teller attempted to reclaim a negative narrative after being labeled “kind of a d–k” more than one decade ago. The...

Blake Lively, Olivia Wilde, more celebrities at the Fendi Baguette Re-Edition party

In the words of Carrie Bradshaw: It’s not a bag, it’s a Baguette. In the years since it first hit...

Jon Stewart surprises Stephen Colbert with a surprise serenade from Andra Day

“The Daily Show” host Jon Stewart was one of Stephen Colbert’s final guests as “The Late Show” continues its final...

Meryl Streep and Martin Short prove romance is still going strong with London dinner date

It’s [not] complicated. Meryl Streep and Martin Short’s romance was proven to be still going strong after they were photographed...

John Travolta reacts to attention his viral Cannes beret looks have gotten

John Travolta explained his bold looks at the 2026 Cannes Film Festival, particularly, his choice to rock different colored berets....

Shakira gets massive 8-figure payout after acquittal in tax fraud case

Shakira has been acquitted in her tax fraud case after an “eight-year ordeal” — and she’s receiving a massive payout...

Shania Twain, Kacey Musgraves, more

Yee-haw! Country music’s biggest stars stepped out Sunday night for the 61st Academy of Country Music Awards. Hosted by Shania...

Julianne Moore slammed for saying she avoids movies with ‘explosions and guns’

Julianne Moore ruffled some feathers online after saying she doesn’t like movies with “explosions and guns.” During a recent interview...

Kevin Jonas shows off shocking shredded physique in gym video

Kevin Jonas’ wife, Danielle Jonas, is burnin’ up for his ripped new bod. Danielle shared video of Kevin, 38, working...

Taylor Swift and Travis Kelce enjoy dinner date in NYC

Taylor Swift and fiancé Travis Kelce were pictured enjoying a date night in New York City on Friday, ahead of...

Anna Wintour’s daughter Bee Shaffer and Francesco Carrozzini split

Bee Shaffer and her husband, Francesco Carrozzini, have split after nearly eight years of marriage, Page Six can reveal. The...

Why stars are flocking back to ‘outdated’ TV dramas

Like vinyl, broadcast TV may be en vogue again. Amid Hollywood contraction, old-school network jobs are suddenly sought after —...

Kyle Richards says these under-$20 sunglasses are ‘giving Tom Ford’

Page Six may be compensated and/or receive an affiliate commission if you click or buy through our links. Featured pricing...

‘Squatters’ host Flash Shelton slams broken system that hands intruders the keys to your home

He thought it would be a simple call to law enforcement. Instead, when intruders took over his late father’s home...

Michael Che pulled out of Kevin Hart roast before shading white writers for racist jokes

Michael Che spoke out about the controversial jokes made on “The Roast of Kevin Hart” after pulling out of the...

‘The Americans’ star Matthew Rhys slams ‘out of control’ trend of playing videos out loud in public

Matthew Rhys is sounding off on the public behavior driving him “bananas.” “The Americans” star isn’t hiding his irritation with...

Meghan Markle pairs $63K diamond necklace with white T-shirt in new As Ever promo pics

Meghan Markle rocked a five-figure diamond necklace alongside a white tee in new As Ever promo pics, shared Monday. As...

‘Survivor’ host Jeff Probst’s brother dies

“Survivor” host Jeff Probst has lost a beloved member of his tribe. One of the television personality’s brothers, Brent, announced...

Tom Brady savagely drags Kevin Hart’s affair into revenge roast: ‘F–k it’

Tom Brady got his revenge on Kevin Hart during Sunday’s Netflix roast. The retired NFL player dragged the comedian’s 2017...

‘Return of the Jedi’ actor dead at 82

Michael Pennington, who had a role in “Star Wars: Episode VI: Return of the Jedi,” has died. The actor passed...

Justin Theroux’s unrecognizable ‘Devil Wears Prada 2’ role baffles viewers

One actor who appears in the new sequel to “The Devil Wears Prada” appears so unrecognizable in the film that...

Carrie Underwood responds to Nikki Glaser feud rumors

Carrie Underwood promises there is no bad blood between her and Nikki Glaser. Last month, online chatter revved up after...

‘Summer House’ star Ciara Miller accuses West Wilson of sleeping with ‘RHONJ’ alum Jennifer Fessler

Ciara Miller accused her ex West Wilson of sleeping with “Real Housewives of New Jersey” alum Jennifer Fessler after the...

Heidi Klum worried Anna Wintour wouldn’t approve her Met Gala 2026 prosthetics

Heidi Klum brought the Halloween spirit to her Met Gala look. Dressing as an actual marble statue — prosthetics and...

Jerry Seinfeld claims ‘Friends’ copied his sitcom ‘Seinfeld’

Jerry Seinfeld threw a little shade at “Friends” at the 2026 Netflix Is a Joke Festival, claiming the show...

Kylie Jenner carries $63K blue Birkin to Knicks playoff game with Timothée Chalamet

She’s keeping up with the Knicks. Following Monday night’s Met Gala, Kylie Jenner and boyfriend Timothée Chalamet were once again...

Christina Ricci leaves shady comment on Katy Perry Met Gala photo

Christina Ricci left a savage — but succinct — comment on an Instagram carousel of pics featuring Katy Perry and...

Kylie Jenner describes ‘crazy’ mushrooms trip that left her in tears

Kylie Jenner once had a “crazy” magic mushroom trip — in public — that left her in tears. The “Kardashians”...

Blue Ivy ignores requests to remove sunglasses at Met Gala

Blue Ivy’s Met Gala debut didn’t go off quite as smoothly as it appeared, as dad Jay-Z seemingly pleaded with...

The real $60M winners from Blake Lively, Justin Baldoni’s career immolation — and whether either can ever come back

After 18 months of bare-knuckle brawling, Blake Lively and Justin Baldoni’s legal saga ended with a whimper. The two sides settled their claims...

Beyoncé is a sparkly skeleton on the Met Gala 2026 red carpet with Blue Ivy and Jay-Z

Beyoncé walked the Met Gala red carpet alongside Jay-Z and Blue Ivy Getty Images Beyoncé’s latest look is anything but...

Princess Eugenie reveals she’s pregnant with third child in return to Instagram

Princess Eugenie is pregnant! The royal shared she is pregnant with her and husband Jack Brooksbank’s third child on her...

Kylie Jenner, Timothée Chalamet join Kim Kardashian and mom Kris to see ‘The Fear of 13’ on Broadway

Timothée Chalamet and Kylie Jenner enjoyed a Broadway outing with her sister Kim Kardashian and her mom, Kris Jenner. The...

Charlize Theron says her daughters will have to earn jobs and their first car

Charlize Theron is making it clear her children won’t be growing up with a Hollywood safety net. During a candid conversation,...

Sharon Stone turns heads with ‘perfect’ unfiltered bikini pic at 68

A rolling Stone gathers no moss. Sharon Stone turned heads with a radiant and unfiltered bikini pic shared to her...

Zayn Malik cancels sellout US tour dates amid health struggles

Zayn Malik has shocked fans by announcing the cancellation of his latest sellout tour. In an announcement made on Friday,...

Tara Lipinski’s surrogate suffers ‘devastating loss’

Tara Lipinski revealed that her surrogate experienced a pregnancy loss while carrying her and husband Todd Kapostasy’s second child. “A few...

Author Cheryl Strayed reveals husband Brian Lindstrom has ‘serious, fatal illness’

Author Cheryl Strayed has revealed that her husband, filmmaker Brian Lindstrom, has been diagnosed with a “serious, fatal illness.” The...

Patriots QB Drake Maye finally breaks silence on Mike Vrabel, Dianna Russini photo scandal

New England Patriots quarterback Drake Maye has finally broken his silence on coach Mike Vrabel’s photo scandal with sports reporter...

Keke Palmer, Zara Larsson, Teyana Taylor and more

The Billboard Women in Music Awards 2026 took place in Los Angeles Wednesday night and honored Teyana Taylor with the...

What Rita Wilson told Tom Hanks after she was diagnosed with breast cancer

Rita Wilson told Tom Hanks after her breast cancer diagnosis in 2015 that if she were to die, she wanted...

King Charles’ personal gift to President Trump carries deep historical message

King Charles’ gift to President Donald Trump holds a deeply historical significance regarding relations between the US and the British...

Taylor Swift breaks cover for NYC dinner outing with dad Scott ahead of Travis Kelce wedding

Taylor Swift broke cover to have an outing with her dad, Scott Swift, and her best friend, Ashley Avignone, ahead...

Inside Demi Lovato’s celebration for her MSG show

Demi Lovato celebrated her sold out show at Madison Square Garden for her “It’s Not That Deep Tour” singing along...

Sally Field refused this iconic role, reveals it was never her ‘cup of tea’

Sally Field almost landed a role in one of the most iconic movies in history and is finally revealing why she ended...

Jennifer Lopez, 56, shows off washboard abs following early morning gym session

Jennifer Lopez is flaunting her impressive abs with sultry selfies. The 56-year-old entertainer posted pics of herself during an early...

Why Golden Globe-winning actress Ann Jillian quit Hollywood at her career peak

Ann Jillian went from a Disney child star to a ’80s sitcom favorite, even portraying her own battle with cancer...

Al Pacino and Beverly D’Angelo reunite for his 86th birthday

Al Pacino and Beverly D’Angelo may have split in 2004, but that didn’t stop them from celebrating the “Godfather” star’s...

‘Wicked’ star Marissa Bode claims she was denied boarding Southern Airways flight over her wheelchair

“Wicked” actress Marissa Bode claimed she was denied boarding her Southern Airways flight because she uses a wheelchair — and...

Kaia Gerber launches second Vuori workout wear collaboration

Dianna Russini deletes X account

Dianna Russini deleted her X account (formerly known as Twitter) as drama surrounding photos of herself with Patriots head coach...

Victoria and David Beckham don matching blue outfits for NYC dinner and more star snaps

Bethenny Frankel hits the beach, Dakota Johnson and Role Model keep close on a stroll and more snaps…

Ciara Miller and Maura Higgins join ‘Dancing With the Stars’ Season 35

“Dancing With the Stars” has officially been renewed for a 35th season this fall, with two reality stars heading up...

from Joey King to Kaia Gerber

Jacob Elordi doesn’t have much in common with his volatile character in “Euphoria” — aside from his success with the...

Mike Vrabel dodges questions about Dianna Russini’s resignation

Mike Vrabel dodged multiple questions off-camera pertaining to the Dianna Russini photo scandal following his lengthy statement on the matter....

Ted Danson says Bill Clinton grilled him about his ‘intentions’ with Mary Steenburgen using Secret Service

Ted Danson’s early romance with Mary Steenburgen included a high-stakes moment at the White House. Danson, 78, recalled an intense one-on-one with...

Aidan Quinn reveals why he’s really not in ‘Practical Magic 2’

“Practical Magic” star Aidan Quinn said he wasn’t asked to be part of the sequel. Quinn, 67, played detective Gary...

Jane Seymour continues her 75th birthday celebration with her ‘Dr. Quinn, Medicine Woman’ co-stars

Jane Seymour celebrated her 75th birthday with her former “Dr. Quinn, Medicine Woman” co-stars. Although the actress’ actual birthday is February 15,...

How Meghan Markle’s new candle line gives sweet nod to kids Prince Archie and Princess Lilibet

Meghan Markle’s new collection for her As Ever lifestyle brand includes candles inspired by her and Prince Harry’s children, Prince...

Nicole Kidman recalls learning of mom’s death at Venice Film Festival

Nicole Kidman is opening up about the day she found out her mother had died. The 58-year-old actress spoke at the...

Christina Applegate’s friends fearing the worst as ‘hellish’ details of star’s hospitalization are revealed: report

Christina Applegate’s friends are reportedly worried for the “Married … With Children” star following her recent hospitalization, fearing the worst...

‘Summer House’ stars Amanda Batula and West Wilson kiss, hold hands at Yankees game

Amanda Batula and West Wilson are continuing to put their romance on display. The couple was spotted kissing on live...

Which stars welcomed kids this year

Hollywood’s next generation is here! Page Six is rounding up the celebs who have given birth or adopted babies this...

Christina Applegate hospitalized amid MS battle: report

Christina Applegate is reportedly hospitalized in Los Angeles, according to a report from TMZ. The actress, 54, who has battled...

After Dianna Russini exit, Times staffers slam Athletic’s ‘reflexive’ response to Mike Vrabel photos

The Gray Lady is feeling a little exposed, it seems. Page Six hears that the Dianna Russini-Mike Vrabel scandal has...

‘Hunger Games’ actor arrested for assault with a deadly weapon, intent to kill

“Hunger Games” star Ethan Jamieson was arrested for allegedly assaulting three men with a deadly weapon with the intent to kill....

Katy Perry under investigation by Australian cops after Ruby Rose’s sexual assault allegation

Katy Perry is being investigated by Australian officials over Ruby Rose’s bombshell sexual assault allegation. “Melbourne Sexual Offenses and Child...

Dave Portnoy claims Dianna Russini’s resignation letter makes ‘zero sense’

Dave Portnoy says Dianna Russini’s resignation from the Athletic makes “zero sense” after the sports journalist’s letter announcing her exit...

Country star Ella Langley says ‘very scary’ Alabama church haunted house led to her getting ‘saved again’

Ella Langley is opening up about what it was like for her growing up in a small town as a Southern...

How to watch new ‘Boy Band Confidential’ docuseries for free

‘I Dream of Jeannie’ star Barbara Eden turns heads at 94 in new photo with husband

Barbara Eden turned heads after sharing a new photo that left fans doing a double take. Eden, 94, stunned in a...

No Doubt guitarist Tom Dumont diagnosed with early-onset Parkinson’s disease

No Doubt guitarist Tom Dumont was diagnosed with early-onset Parkinson’s disease “a number of years ago.” The musician shared the...

Sabrina Carpenter apologizes for mistaking fan’s cultural chant for yodeling in awkward Coachella moment

Sabrina Carpenter delivered an apology after she misidentified a fan’s celebratory Arabic call as “yodeling” during her Coachella headlining set...

‘Love on the Spectrum’ stars Abbey Romeo and David Isaacman break silence after split

“Love on the Spectrum” stars Abbey Romeo and David Isaacman have confirmed their split. “Abbey and David spent four and...

Charli D’Amelio, Madelyn Cline, Alix Earle and more

Celebrities braved the threat of dust storms and rain to flock to the desert once again for the 25th edition...

How ex-Prince Andrew is dividing royal family as his two surprising allies are revealed

Princess Anne and Prince Edward are Andrew Mountbatten-Windsor’s remaining allies. The disgraced royal’s siblings have reportedly still been in contact...

Natasha Lyonne attends NYC movie premiere days after being removed from Delta flight

Natasha Lyonne resurfaced at the NYC premiere of “Lorne” just days after she was booted from a Delta Airlines flight....

Cardi B and Stefon Diggs spark reconciliation rumors 2 months after split

She likes it like that. Cardi B and Stefon Diggs sparked reconciliation buzz after the athlete attended his ex’s Little...

‘The Pitt’ star cries as he reveals show helped pay off $80K debt

Patrick Ball broke down in tears while revealing how his role on “The Pitt” paid off his college debt. Ball...

Rod Stewart’s wife Penny Lancaster says she deserves a medal for marriage to rock legend

After 26 years of partnership and 18 years of marriage to Rod Stewart, Penny Lancaster says she deserves a medal. In a...

Catherine O’Hara ‘wasn’t talking much’ before her tragic death at age 71

Catherine O’Hara’s brother is opening up about the “Schitt’s Creek” star’s final days. On Sunday’s episode of his “Dreams of...

‘Brady Bunch’ star Mike Lookinland says he went ‘fully off the rails’ in his 20s after growing up on hit show

“Brady Bunch” star Mike Lookinland admitted his behavior was “fully off the rails” after finding mega stardom with “The Brady...

Savannah Guthrie returns to ‘Today’ after absence as search continues for missing mom Nancy

Savannah Guthrie returns to the “Today” show after more than two months as the search for her missing mother, Nancy...

Meghan Markle shares a peek at Prince Archie and Princess Lilibet’s Easter festivities

Meghan Markle took to social media on Easter Sunday to share a series of adorable glimpses of her kids enjoying...

Iconic ’90s TV star unrecognizable in sequin skirt and blond wig for new role

David Duchovny looked unrecognizable as he stepped out in a sequin skirt and a blond wig for a forthcoming movie....

Carl Radke and Lindsay Hubbard reunite for Uber Eats

“Summer House” stars Carl Radke and Lindsay Hubbard met up for an emotional reunion while indulging in Uber Eats. The...

Taylor Frankie Paul vacations with her older kids amid Ever custody dispute, domestic violence scandal

Taylor Frankie Paul embarked on a family vacation with her two older kids — after losing temporary custody of her...

Paige DeSorbo, Hannah Berner show support for Ciara Miller after ‘Summer House’ betrayal

Paige DeSorbo and Hannah Berner are throwing their support behind their former “Summer House” co-star Ciara Miller after Amanda Batula...

Vanessa Trump breaks silence on boyfriend Tiger Woods’ DUI arrest

Vanessa Trump subtly addressed her boyfriend Tiger Woods’ DUI arrest via Instagram on Friday. “Love you,” she wrote on her...

Zendaya’s ‘The Drama’ NYC premiere gown is covered in 27 shades of ‘something blue’

And the bride wore something blue. Zendaya arrived at the New York City premiere of “The Drama” on Thursday night...

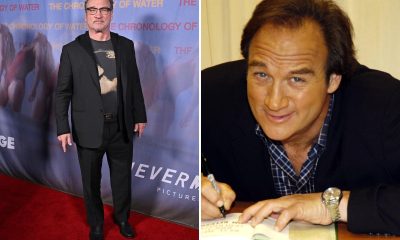

Jim Belushi’s Oregon ranch is his ‘spiritual’ sanctuary with sweat lodge, roaming cattle

Jim Belushi has it all: success in a decades-long career, legions of friends in high and low places, and a...

Brooks Nader and Taron Egerton kiss during LA date night

Brooks Nader and Taron Egerton once again couldn’t keep their hands off each other during a date night in Santa...

Roseanne Barr reveals heart issue, fears she’ll die during surgery

Roseanne Barr received a stark diagnosis — a “damaged” heart — a warning that left her fearing she could “die on...

Kendra Duggar hires lawyer as aunt urges divorce and parents break silence on Joseph arrest

Kendra Duggar reveals she hired her own lawyer in a jail call with husband Joseph as new details emerge in...

Amanda Peet exposes ‘desperation galore’ behind Hollywood fame

Amanda Peet is pulling back the threadbare curtain on life underneath the spotlight. The 54-year-old actress called out Hollywood as nothing...

Charlie Puth details his new album with Page Six Radio

Taylor Swift declared Charlie Puth should be a bigger artist, and now she’s in luck. The musician is releasing new...

Arnold Schwarzenegger’s look-alike son, Joseph Baena, wins first bodybuilding competition

Just call them twins. Arnold Schwarzenegger’s look-alike son Joseph Baena proved the apple doesn’t fall too far from the tree...

Kim Kardashian accused of heavily editing Khloé’s face in photos from Japan trip

Another Photoshop fail? Kim Kardashian was called out by fans for heavily editing her sister Khloé Kardashian’s face in new...

Trending

- News, 1 week ago: Trump administration sues UCLA, alleging antisemitic environment festered
- Trending, 2 weeks ago: Knicks made a Donovan Mitchell adjustment the Cavaliers have no answer for
- Trending, 1 week ago: Spurs’ Mitch Johnson Finishes Third in Coach of the Year Voting
- Trending, 2 weeks ago: ‘Lee Cronin’s The Mummy’ Comes to Digital, But When Will ‘The Mummy’ 2026 Be Streaming Free on HBO Max?
- Trending, 2 weeks ago: The St. Louis Cardinals have an emerging star in Jordan Walker
- Trending, 2 weeks ago: David Bednar blows save in ninth as Yankees lose to Mets
- Trending, 2 weeks ago: How to watch Giants vs. Athletics
- News, 6 days ago: Iran War Live Updates: U.S. and Iran Trade Strikes, Further Threatening Negotiations