Technology

The Ethics of AI and Machine Learning

The rise of artificial intelligence (AI) and machine learning (ML) continues to revolutionize our world, pushing the boundaries of what machines can do.

But with this exciting wave of innovation comes a raft of ethical considerations we must address.

As AI spreads into every facet of human activity, we must examine the moral framework within which these systems operate.

The challenge is not just programming systems to be efficient, but also instilling in them a set of values that reflects our deepest ethical convictions.

Ethical Concerns

AI Bias and Discrimination

One of the most pressing ethical issues is the potential for AI to perpetuate existing biases, leading to discriminatory practices.

For example, AI systems may inadvertently learn from biased historical data, carrying forward past prejudices into future decisions.
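One way to make this concern concrete is to measure how outcomes differ across groups in historical data before a model learns from it. The sketch below uses made-up records and hypothetical helper names (`selection_rates`, `disparate_impact_ratio`); it is an illustration under those assumptions, not a method described in this article. The 0.8 threshold mentioned in the comments is the "four-fifths" rule of thumb from US employment guidelines, included only as an example benchmark.

```python
from collections import defaultdict


def selection_rates(records):
    """Return the fraction of positive outcomes per group.

    `records` is an iterable of (group, selected) pairs, where
    `selected` is a bool. Both names are illustrative assumptions.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, selected in records:
        totals[group] += 1
        if selected:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Ratio of the lowest group selection rate to the highest."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected in any group, so no disparity to report
    return min(rates.values()) / highest


# Made-up historical hiring decisions, for illustration only.
history = (
    [("men", True)] * 60 + [("men", False)] * 40
    + [("women", True)] * 30 + [("women", False)] * 70
)

rates = selection_rates(history)
ratio = disparate_impact_ratio(rates)

print(rates)
# A ratio below roughly 0.8 is often treated as a signal worth investigating.
print(f"disparate impact ratio: {ratio:.2f}")
```

A low ratio does not prove discrimination on its own, but it flags data that a model could learn from and reproduce, which is the risk described above.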

Privacy Infringements

AI’s ability to analyze vast amounts of data raises significant privacy concerns. Can individuals maintain a right to privacy in an age where AI algorithms can predict behavior and preferences?

Responsibility and Accountability

When AI systems make decisions, who bears responsibility for the outcomes? This becomes particularly critical when those decisions have life-altering consequences.

Impact on Employment

AI’s capability to automate jobs has sparked fears over large-scale job displacement. How society can adapt to these changes remains a major question.

Ethical Use in Autonomous Systems

The implementation of AI in autonomous systems like vehicles or drones puts ethical decision-making in the hands of algorithms. This could lead to critical decisions being made without human empathy or understanding.

Long-term Societal Effects

What will be the enduring impacts of AI on social structures, cultural norms, and human interaction?

Case Studies

The Role of AI in Recruitment: A Case Study

A prominent case that illustrates the issue of AI bias involves a leading tech company that employed an AI recruitment system.

This system was discovered to favor male candidates over female candidates, due to biases inherent in its training data – a history of resumes submitted to the company, predominantly from men.

This led to a significant review of AI deployment in hiring practices and a push for algorithms that are transparent and designed to mitigate bias.

AI in Surveillance: The Privacy Debate

Concerning privacy, a city’s deployment of AI-powered surveillance cameras stoked fears of a surveillance state, raising alarms about the erosion of the public’s right to privacy. This scenario ignited a broader discussion on privacy rights in public spaces and how AI should be ethically integrated without infringing on individual liberties.

Automated Vehicles: Responsibility and Accountability

The arrival of AI in autonomous vehicles draws attention to the moral decision-making of machines, as illustrated when an autonomous vehicle was involved in a fatal collision.

The ensuing investigation highlighted the complexity of assigning responsibility, whether it be the AI developer, the vehicle manufacturer, or the human supervisor of the autonomous system.

AI-Driven Job Replacement: A Manufacturing Industry Insight

In the manufacturing sector, AI’s capability to optimize efficiency led to the reduction of human labor, exemplifying fears of job displacement. This case sheds light on the urgent need for policies and educational programs that can help workers transition into new roles in an AI-augmented job market.

Ethical Use of AI in Military Drones

Military drones equipped with AI for autonomous decision-making in conflict zones have become hotly debated. When a drone strike is decided by AI without direct human oversight, it challenges the legality and ethics of using lethal autonomous weapons, questioning the role of machines in matters of life and death.

Long-term Effects of AI on Society: Cross-Generational Analysis

A longitudinal study investigating the influence of AI on social interactions reveals shifts in cultural norms, especially among younger generations more accustomed to digital interaction.

This case underlines the importance of understanding and shaping the long-term effects of AI on human behavior and societal structures.

In illustrating these concerns, consider Amazon’s AI recruiting tool, which was scrapped after displaying a bias against female candidates.

Facial recognition technology’s inaccuracies have raised flags about privacy violations, especially among minorities who are often misidentified.

Autonomous vehicle accidents amplify questions around algorithmic responsibility, and interactions with AI chatbots expose potential gaps in maintaining ethical bounds.

Future Considerations and Solutions

Addressing these challenges involves several key actions, such as:

- Developing and implementing AI bias mitigation techniques and standards.

- Enacting privacy regulations specifically tailored to AI data collection and processing.

- Ensuring corporate and legislative accountability for AI decision-making.

- Creating reskilling and job creation initiatives to cushion the transition brought by AI-driven automation.

- Establishing a framework for the ethical deployment of autonomous systems.

- Encouraging ongoing conversations about the evolving role of AI in our societies.

Expert Insights

The ethical concerns surrounding artificial intelligence are best understood alongside real-world insights from experts in the field.

Dr. Kate Crawford, a senior principal researcher at Microsoft Research New York, argues that AI systems should be subjected to rigorous audits for bias, comparable to how financial institutions undergo financial audits. In her book “Atlas of AI,” she delves into the social and political dimensions of AI technologies (Crawford, K. “Atlas of AI,” Yale University Press, 2021).

An insight from the Privacy and Data Project at the Center for Democracy & Technology emphasizes the need for legal standards that specifically address the nuances of consent, data retention, and usage when it comes to the deployment of AI. They argue that mechanisms ensuring transparency in AI-driven processes are essential to protect individual privacy rights (Center for Democracy & Technology, “Privacy and Data Project,” 2021).

Elon Musk, CEO of SpaceX and Tesla, has voiced concerns about AI developments outpacing regulatory frameworks, emphasizing that regulation should be put in place proactively rather than after problems become urgent (Musk, E. “Interview on AI,” SXSW Conference, 2018).

Moreover, the World Economic Forum has highlighted the urgency of redesigning education and training systems to equip workers with skills relevant to an AI-augmented future (World Economic Forum, “Jobs of Tomorrow: Mapping Opportunity in the New Economy,” 2020).

As we navigate the complexities of AI integration in various sectors, these documented insights from influential leaders and organizations underline the need for a cooperative and informed approach to managing AI’s ethical challenges.

Expert Insights

Informed by scholars and industry leaders, the conversation surrounding AI ethics moves beyond theoretical discourse into actionable guidance.

Dr. Kate Crawford’s call for bias audits draws attention to the need for accountability in AI development, suggesting a concrete method for oversight (Crawford, K. “Atlas of AI,” 2021).

Privacy advocates from the Center for Democracy & Technology propose clear-cut legal standards and transparency mechanisms to safeguard individual rights as AI technologies become more pervasive in society (Center for Democracy & Technology, 2021).

Visionaries like Elon Musk caution against the lag in regulatory responses to fast-paced AI advancements, pushing for a more proactive stance to governance (Musk, E., 2018).

Meanwhile, insights from the World Economic Forum prompt a proactive reskilling agenda, envisioning a future workforce that thrives alongside AI rather than being displaced by it (World Economic Forum, 2020).

These perspectives underscore the global imperative to harmonize AI’s progression with ethical and societal norms.

Experts like Dr. Jane Smith remind us that “the ethical use of AI should be our guiding principle,” while Prof. John Doe calls for AI design that respects “human dignity, diversity, and rights.” Dr. Alex Johnson points out that “the responsibility for AI’s impact on society is shared.”

Audience Engagement

To foster a more interactive discussion, consider posing questions such as:

- How can AI enhance fairness and equality?

- What steps can ensure AI balances privacy with personalized services?

- At what point should we hold AI, or the humans behind it, accountable for decisions made?

- Do you see AI impacting jobs positively or negatively?

- When should we trust AI with autonomous decision-making, and how do we ensure ethical guidelines are followed?

- What are your own concerns and aspirations for the long-term influence of AI on society?

The intertwining of AI with the fabric of our lives requires a thoughtful conversation about where we’re headed and how we get there responsibly. Let’s continue to question, challenge, and steer the AI journey to ensure it aligns with our shared ethical values.

The Case for Custom eLearning Platforms: Why Organizations Are Making the Switch

The corporate eLearning market has exploded in recent years, growing over 800% since 2000. As the demand for eLearning continues to accelerate, more and more organizations are finding that off-the-shelf solutions cannot keep pace with their training needs. This has led many companies to make the switch to custom-built eLearning platforms tailored specifically for their requirements.

There are several key reasons driving the demand for customized eLearning tools:

Greater Flexibility and Scalability

Generic eLearning software packages often impose rigid constraints that limit their ability to adapt to an organization’s evolving needs. Meanwhile, the “one-size-fits-all” approach fails to support the personalized learning critical for employee development. Custom platforms provide flexibility to add and modify features to match ever-changing business goals. As companies scale training across global workforces, custom solutions built on cloud infrastructure can scale seamlessly to handle growing demand.

Deeper Integration Across Systems

Smooth integration with existing HR, LMS, and other business systems is critical for optimizing training workflows. However, off-the-shelf tools rarely integrate well, creating data and process silos. Custom platforms can tightly integrate role-based learning paths with core business applications, sync user profiles, enable single sign-on, and more. This level of integration makes the training function more effective.

Better Data and Analytics

Generic software severely limits access to the data insights that drive improvement. Custom platforms unlock a trove of analytics on content consumption, learner progression, platform adoption, and real-time feedback. Integrated analytics dashboards and APIs give businesses deep visibility across the learner lifecycle. These insights help continuously enhance the learner experience, target development gaps, and demonstrate direct training ROI.

Enhanced Learner Engagement

For modern learners accustomed to consumer-grade digital experiences, poor platform usability quickly erodes engagement. Custom designs allow companies to incorporate familiar features from popular apps and websites while optimizing for their audience. Adaptive learning approaches further personalize content to individual styles and needs. With a modular component architecture, custom platforms can adopt new modalities like AR/VR to captivate learners.

Brand and Culture Alignment

Off-the-shelf tools impose a generic and often disruptive experience that clashes with existing brand identity and culture. In contrast, custom platforms allow organizations to carry over familiar styling, voice, and workflow patterns. Consistency in experience preserves brand recognition while smoother onboarding leads to wider adoption across all employee groups. Over time, the platform can evolve alongside cultural changes as well.

Custom eLearning tools require greater upfront investment, but for enterprise training needs the long-term benefits far outweigh the costs. The ability to mold platforms to current and future needs yields a greater return on learning spend.

As businesses demand ever more from their learning technology, custom solutions provide the agility needed to scale. Rather than forcing training functions into the constraints of generic software, custom eLearning development keeps the focus on nurturing talent and capabilities. For any organization looking to drive workforce transformation through learning, custom eLearning represents the way forward.

Pintarnya raises $16.7M to power jobs and financial services in Indonesia

Pintarnya, an Indonesian employment platform that goes beyond job matching by offering financial services along with full-time and side-gig opportunities, said it has raised a $16.7 million Series A round.

The funding was led by Square Peg with participation from existing investors Vertex Venture Southeast Asia & India and East Ventures.

Ghirish Pokardas, Nelly Nurmalasari, and Henry Hendrawan founded Pintarnya in 2022 to tackle two of the biggest challenges Indonesians face daily: earning enough and borrowing responsibly.

“Traditionally, mass workers in Indonesia find jobs offline through job fairs or word of mouth, with employers buried in paper applications and candidates rarely hearing back. For borrowing, their options are often limited to family/friend or predatory lenders with harsh collection practices,” Henry Hendrawan, co-founder of Pintarnya, told TechCrunch. “We digitize job matching with AI to make hiring faster and we provide workers with safer, healthier lending options — designed around what they can reasonably afford, rather than pushing them deeper into debt.”

Around 59% of Indonesia’s 150 million workforce is employed in the informal sector, highlighting the difficulties these workers encounter in accessing formal financial services because they lack verifiable income and official employment documentation.

Pintarnya tackles this challenge by partnering with asset-backed lenders to offer secured loans, using collateral such as gold, electronics, or vehicles, Hendrawan added.

Since its seed funding in 2022, the platform has grown to serve over 10 million job seekers and 40,000 employers nationwide. Revenue has increased almost fivefold year-over-year, and the company expects to reach break-even by the end of the year, Hendrawan noted. Pintarnya primarily serves users aged 21 to 40, most of whom have a high school education or a diploma below university level. The startup aims to focus on this underserved segment, given the large population of blue-collar and informal workers in Indonesia.

“Through the journey of building employment services, we discovered that our users needed more than just jobs — they needed access to financial services that traditional banks couldn’t provide,” said Hendrawan.

While Indonesia already has job platforms like JobStreet, Kalibrr, and Glints, these primarily cater to white-collar roles, which represent only a small portion of the workforce, according to Hendrawan. Pintarnya’s platform is designed specifically for blue-collar workers, offering tailored experiences such as quick-apply options for walk-in interviews, affordable e-learning on relevant skills, in-app opportunities for supplemental income, and seamless connections to financial services like loans.

The same trend is evident in Indonesia’s fintech sector, which similarly caters to white-collar or upper-middle-class consumers. Conventional credit scoring models for loans, which rely on steady monthly income and bank account activity, often leave blue-collar workers overlooked by existing fintech providers, Hendrawan explained.

When asked about which fintech services are most in demand, Hendrawan mentioned, “Given their employment status, lending is the most in-demand financial service for Pintarnya’s users today. We are planning to ‘graduate’ them to micro-savings and investments down the road through innovative products with our partners.”

The new funding will enable Pintarnya to strengthen its platform technology and broaden its financial service offerings through strategic partnerships. With most Indonesian workers employed in blue-collar and informal sectors, the co-founders see substantial growth opportunities in the local market. Leveraging their extensive experience in managing businesses across Southeast Asia, they are also open to exploring regional expansion when the timing is right.

“Our vision is for Pintarnya to be the everyday companion that empowers Indonesians to not only make ends meet today, but also plan, grow, and upgrade their lives tomorrow … In five years, we see Pintarnya as the go-to super app for Indonesia’s workers, not just for earning income, but as a trusted partner throughout their life journey,” Hendrawan said. “We want to be the first stop when someone is looking for work, a place that helps them upgrade their skills, and a reliable guide as they make financial decisions.”

OpenAI warns against SPVs and other ‘unauthorized’ investments

In a new blog post, OpenAI warns against “unauthorized opportunities to gain exposure to OpenAI through a variety of means,” including special purpose vehicles, known as SPVs.

“We urge you to be careful if you are contacted by a firm that purports to have access to OpenAI, including through the sale of an SPV interest with exposure to OpenAI equity,” the company writes. The blog post acknowledges that “not every offer of OpenAI equity […] is problematic” but says firms may be “attempting to circumvent our transfer restrictions.”

“If so, the sale will not be recognized and carry no economic value to you,” OpenAI says.

Investors have increasingly used SPVs (which pool money for one-off investments) as a way to buy into hot AI startups, prompting other VCs to criticize them as a vehicle for “tourist chumps.”

Business Insider reports that OpenAI isn’t the only major AI company looking to crack down on SPVs, with Anthropic reportedly telling Menlo Ventures it must use its own capital, not an SPV, to invest in an upcoming round.

-

Entertainment1 week ago

Entertainment1 week agoBeyoncé is a sparkly skeleton on the Met Gala 2026 red carpet with Blue Ivy and Jay-Z

-

Entertainment2 weeks ago

Entertainment2 weeks agoKeke Palmer, Zara Larsson, Teyana Taylor and more

-

Trending2 weeks ago

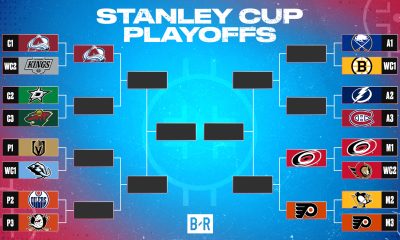

Trending2 weeks agoUpdated 2026 NHL Stanley Cup Playoffs Bracket, Schedule and Top Highlights from April 29

-

Trending3 weeks ago

Trending3 weeks agoHyeseong Kim’s RBI single | 04/24/2026

-

Trending2 weeks ago

Trending2 weeks agoPost Malone ends Stagecoach 2026 set with fiery pro-war anthem

-

Trending2 weeks ago

Trending2 weeks agoNew York Knicks vs. Atlanta Hawks Live Score and Stats – April 30, 2026 Gametracker

-

Entertainment2 weeks ago

Entertainment2 weeks agoInside Demi Lovato’s celebration for her MSG show

-

Trending2 weeks ago

Trending2 weeks agoJessica Biel Allegedly Delivers an ‘Ultimatum’ to Justin Timberlake — And It Sounds Serious